What’s reality? I don’t know. When my bird was looking at my computer monitor I thought, ‘That bird has no idea what he’s looking at.’ And yet what does the bird do? Does he panic? No, he can’t really panic, he just does the best he can. Is he able to live in a world where he’s so ignorant? Well, he doesn’t really have a choice. The bird is okay even though he doesn’t understand the world. You’re that bird looking at the monitor, and you’re thinking to yourself, ‘I can figure this out.’ Maybe you have some bird ideas. Maybe that’s the best you can do.

— Terry A. Davis

-

Better ways to type on a <5% Keyboard

When was the last time you enjoyed typing out what you wanted on a TV with nothing but a remote? Most solutions for speeding up text input on such devices are alternatives such as voice recognition or gaze-based input detection.

Is there a way to make typing efficient on a consumer remote that has nothing more than navigation buttons?

In theory, how could you reduce the number of keystrokes needed to type a single character while still maintaining the keystrokes/available-keys ratio, and without steepening the learning curve? A decision-tree-based input method might help here.

For the sake of simplicity, let us assume that we retain the existing QWERTY keyboard with its 4 rows (letters and numbers, symbols excluded), and that the first keystroke is spent selecting one of those 4 rows.

If we allow a maximum of 12 characters per row, that also covers most of the punctuation used in English: :;<,>.?/_-+={}[]"'

Twelve was chosen because it is divisible by 4, so each of the four arrow keys can be assigned an equal number of characters. The second keystroke then narrows the selection down to 3 adjacent characters, and the third keystroke picks the character itself. Sounds good, but how well does this fare?

By the standards of the metric we just invented (totally scientific!), we map 45 characters onto 4 keys, with each character reached by a combination of 3 keystrokes.
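The three-keystroke scheme can be sketched as a small tree walk. This is a minimal Python illustration under my own assumptions: the four arrow keys are indexed 0 to 3 (up, right, down, left), and the rows in ROWS are a hypothetical overlay of at most 12 characters each (with a space at the end of the last row), not necessarily the exact layout intended here. The first press picks a row, the second one of four groups of three, and the third a character within that group.

```python
# Arrow keys, indexed 0..3; each press picks one branch of the tree.
ARROWS = ["up", "right", "down", "left"]

# Hypothetical overlay: four rows of at most 12 characters each,
# i.e. up to four groups of three. The last row ends with a space.
ROWS = [
    "1234567890-=",
    "qwertyuiop[]",
    "asdfghjkl;'",
    "zxcvbnm,./ ",
]


def encode(ch):
    """Return the three arrow presses that select ch: row, group, position."""
    for r, row in enumerate(ROWS):
        i = row.find(ch)
        if i != -1:
            group, offset = divmod(i, 3)  # which group of three, and where in it
            return [ARROWS[r], ARROWS[group], ARROWS[offset]]
    raise ValueError(f"{ch!r} is not on this keyboard")


def decode(presses):
    """Walk the tree for three presses; return None for an empty slot."""
    r, group, offset = (ARROWS.index(p) for p in presses)
    i = group * 3 + offset
    if offset > 2 or i >= len(ROWS[r]):
        return None
    return ROWS[r][i]


def keystrokes(text):
    """Every character on the overlay costs exactly three presses."""
    return sum(len(encode(ch)) for ch in text.lower())


print(encode("q"))                     # ['right', 'up', 'up']
print(decode(["right", "up", "up"]))   # q
print(keystrokes("BTS"))               # 9
```

Because a group holds only three characters, the third press uses just three of the four arrows; decode returns None for the unused slots and for the gaps at the end of shorter rows.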

We want efficiency to decrease if either:

- The average keystrokes per character is high

- The keyboard is too small for the number of characters

For our case, we can assume that the average number of keystrokes per character is the base-N_keys logarithm of the number of characters N_chars, rounded up, where N_keys is the total number of keys on the keyboard.
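Since the estimate is a ceiling of a logarithm, it can be computed exactly with integers: it is the depth of the smallest N_keys-way tree with at least N_chars leaves. A minimal Python sketch (the function name k_avg is my own):

```python
def k_avg(n_chars, n_keys):
    """Smallest k with n_keys**k >= n_chars, i.e. ceil(log_{n_keys} n_chars).

    Integer arithmetic is used instead of math.log so that exact powers
    (e.g. 64 characters on 4 keys) cannot be thrown off by float rounding.
    """
    if n_keys < 2:
        raise ValueError("need at least two keys")
    k, reachable = 0, 1
    while reachable < n_chars:
        reachable *= n_keys  # one more keystroke multiplies the reachable leaves
        k += 1
    return k


print(k_avg(45, 4))  # 3
```

For the 45 characters on 4 keys above, three levels reach 4³ = 64 leaves, so 3 keystrokes per character suffice.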

\[ K_\text{avg} = \lceil \log_{N_\text{keys}} N_\text{chars} \rceil \]

So it could mean a keyboard like this, although there’s a lot of room for improvement in terms of the overlay:

Comparison to TV input

It is not trivial to calculate the number of keystrokes required by conventional input methods, like the on-screen keyboard used in most modern televisions, but it can be approximated under the following assumptions:

- Only 4-directional movement; no diagonal moves

- A QWERTY layout is used

- The cursor stays on the last key entered

Based on the above assumptions, a Python script could be written to calculate the keystrokes taken on a TV input:

```python
from collections import deque

# On-screen QWERTY layout of a typical TV keyboard (symbols excluded).
layout = [
    list("1234567890"),
    list("qwertyuiop"),
    list("asdfghjkl"),
    list("zxcvbnm"),
]

# Map every key to its (row, column) position.
pos = {}
for r in range(len(layout)):
    for c in range(len(layout[r])):
        pos[layout[r][c]] = (r, c)


def bfs_distance(a, b):
    """Fewest arrow presses to move the cursor from key a to key b.

    Only up/down/left/right moves are allowed, and the cursor cannot
    move to a column that does not exist in a shorter row.
    """
    if a == b:
        return 0
    sr, sc = pos[a]
    tr, tc = pos[b]
    q = deque([(sr, sc, 0)])
    visited = set()
    while q:
        r, c, d = q.popleft()
        if (r, c) == (tr, tc):
            return d
        if (r, c) in visited:
            continue
        visited.add((r, c))
        for dr, dc in [(-1, 0), (1, 0), (0, -1), (0, 1)]:
            nr, nc = r + dr, c + dc
            if 0 <= nr < len(layout) and 0 <= nc < len(layout[nr]):
                if layout[nr][nc] != " ":  # skip blank padding cells, if any
                    q.append((nr, nc, d + 1))
    return float("inf")


def keystrokes_tv(text, start="a"):
    """Total presses to type text: cursor moves plus one OK press per key."""
    text = text.lower()
    current = start
    total = 0
    for ch in text:
        if ch not in pos:
            continue  # characters not on the layout (e.g. spaces) are skipped
        total += bfs_distance(current, ch)
        total += 1  # press OK to enter the character
        current = ch
    return total
```

Lacking a suitable dataset to compare the new decision-tree-based system against existing methods, I instead compared both on the number of keystrokes needed to type some of the most popular YouTube searches of the previous decade. The new method’s advantage is most obvious for longer queries, although that matters less in practice: with predictive completion, you usually only need to type the first few characters of a word.

Notable differences still exist for shorter queries:

| Search term | Keystrokes (TV) | Keystrokes (new algorithm) | Percentage decrease |
|---|---|---|---|
| BTS | 14 | 9 | 35.71% |
| pewdiepie | 54 | 27 | 50.00% |
| ASMR | 16 | 12 | 25.00% |
| Billie Eilish | 51 | 39 | 23.53% |
| baby shark | 50 | 30 | 40.00% |
| old town road | 67 | 39 | 41.79% |
| music | 34 | 15 | 55.88% |
| badabun | 33 | 21 | 36.36% |
| blackpink | 48 | 27 | 43.75% |
| Fortnite | 35 | 24 | 31.43% |
| Minecraft | 42 | 27 | 35.71% |
| pewdiepie vs t series | 91 | 63 | 30.77% |
| peliculas completas en español | 156 | 90 | 42.31% |
| senorita | 38 | 24 | 36.84% |
| Ariana grande | 61 | 39 | 36.07% |
| alan walker | 56 | 33 | 41.07% |
| tik tok | 25 | 21 | 16.00% |
| musica | 38 | 18 | 52.63% |
| WWE | 6 | 9 | -50.00% |
| Calma | 29 | 15 | 48.28% |
| bad bunny | 32 | 27 | 15.62% |
| Eminem | 33 | 18 | 45.45% |
| queen | 21 | 15 | 28.57% |
| ed Sheeran | 33 | 30 | 9.09% |
| Peppa pig | 58 | 27 | 53.45% |
| despacito | 50 | 27 | 46.00% |
| la rosa de Guadalupe | 92 | 60 | 34.78% |
| Taki Taki | 42 | 27 | 35.71% |
| Enes Batur | 43 | 30 | 30.23% |
| Michael Jackson | 86 | 45 | 47.67% |
| songs | 24 | 15 | 37.50% |
| t series | 30 | 24 | 20.00% |
| maluma | 40 | 18 | 55.00% |
| bad guy | 24 | 21 | 12.50% |
| markiplier | 46 | 30 | 34.78% |
| Taylor swift | 54 | 36 | 33.33% |
| Ozuna | 41 | 15 | 63.41% |
| nightcore | 44 | 27 | 38.64% |
| Paulo Londra | 59 | 36 | 38.98% |
| karaoke | 46 | 21 | 54.35% |
| James Charles | 69 | 39 | 43.48% |
| youtube | 30 | 21 | 30.00% |
| imagine dragons | 75 | 45 | 40.00% |
| dance monkey | 53 | 36 | 32.08% |
| twice | 28 | 15 | 46.43% |
| Roblox | 36 | 18 | 50.00% |
| free fire | 25 | 27 | -8.00% |
| gacha life | 46 | 30 | 34.78% |
| post-Malone | 63 | 33 | 47.62% |
| Justin Bieber | 56 | 39 | 30.36% |
| Felipe Neto | 49 | 33 | 32.65% |
| Bruno mars | 46 | 30 | 34.78% |
| 7 rings | 31 | 21 | 32.26% |
| china | 25 | 15 | 40.00% |
| Doraemon | 44 | 24 | 45.45% |
| anuel | 25 | 15 | 40.00% |
| kill this love | 57 | 42 | 26.32% |
| jacksepticeye | 72 | 39 | 45.83% |
| maroon 5 | 39 | 24 | 38.46% |
| Joe Rogan | 44 | 27 | 38.64% |
| game of thrones | 70 | 45 | 35.71% |
| marshmello | 51 | 30 | 41.18% |
| Linkin park | 57 | 33 | 42.11% |
| David dobrik | 52 | 36 | 30.77% |
| bohemian rhapsody | 108 | 51 | 52.78% |
| squeeze | 28 | 21 | 25.00% |
| lady gaga | 44 | 27 | 38.64% |
| aaj tak live | 43 | 36 | 16.28% |
| 5-minute crafts | 56 | 45 | 19.64% |
| cardi b | 29 | 21 | 27.59% |
| geo news live | 54 | 39 | 27.78% |
| Selena Gomez | 62 | 36 | 41.94% |
| Coldplay | 50 | 24 | 52.00% |
| dantdm | 27 | 18 | 33.33% |
| lofi | 24 | 12 | 50.00% |
| anuel aa | 35 | 24 | 31.43% |
| Rihanna | 35 | 21 | 40.00% |
| drake | 26 | 15 | 42.31% |
| dross | 22 | 15 | 31.82% |
| Los polinesios | 70 | 42 | 40.00% |
| rap | 21 | 9 | 57.14% |
| Shawn Mendes | 48 | 36 | 25.00% |
| cocomelon | 59 | 27 | 54.24% |
| sia | 19 | 9 | 52.63% |
| song | 20 | 12 | 40.00% |
| slime | 24 | 15 | 37.50% |
| dua lipa | 43 | 24 | 44.19% |
| con Calma | 48 | 27 | 43.75% |
| funny videos | 54 | 36 | 33.33% |
| mikecrack | 44 | 27 | 38.64% |
| vegetta777 | 38 | 30 | 21.05% |
| pubg | 22 | 12 | 45.45% |
| avengers endgame | 66 | 48 | 27.27% |
| movies | 37 | 18 | 51.35% |
| soolking | 26 | 24 | 7.69% |
| believer | 38 | 24 | 36.84% |
| GTA 5 | 20 | 15 | 25.00% |
| Romeo Santos | 66 | 36 | 45.45% |
| Katy perry | 43 | 30 | 30.23% |

The code used for these calculations can be found here.
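Every figure in the new-algorithm column equals three times the length of the search term, spaces and punctuation included (the TV script above, by contrast, skips characters that are not on its layout, such as spaces), and the last column is the relative reduction from the TV count. A minimal sketch of that arithmetic; the function names are my own and the TV counts are copied from the table:

```python
def keystrokes_new(text, per_char=3):
    """Decision-tree input: every character, spaces included, costs per_char presses."""
    return per_char * len(text)


def percent_decrease(tv, new):
    """Relative reduction versus the TV keyboard, in percent (negative means worse)."""
    return (tv - new) / tv * 100


# TV counts taken from the table above.
for term, tv in [("BTS", 14), ("WWE", 6), ("bohemian rhapsody", 108)]:
    new = keystrokes_new(term)
    print(f"{term}: {tv} -> {new} ({percent_decrease(tv, new):.2f}%)")
# BTS: 14 -> 9 (35.71%)
# WWE: 6 -> 9 (-50.00%)
# bohemian rhapsody: 108 -> 51 (52.78%)
```

Short queries whose letters happen to sit close together on the TV keyboard, like WWE, are the cases where the fixed cost of three presses per character loses.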

-

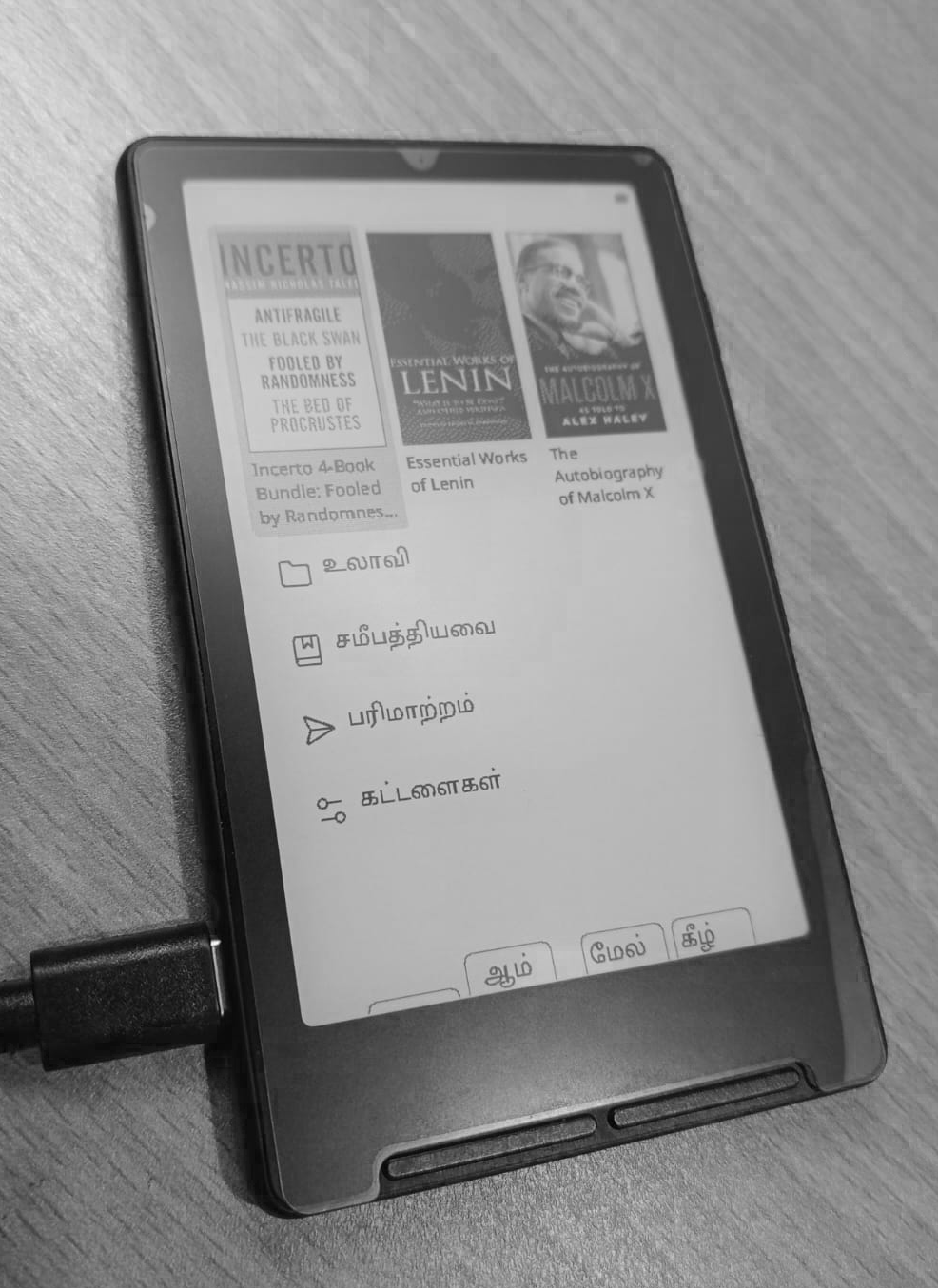

Tamil support for the Xteink X4 reader

Or how on earth do you render Tamil characters without a shaping engine?

I recently bought the Xteink X4 e-reader, and I must say I am impressed by what it delivers despite its hardware limitations. It runs on a 32-bit ESP32-C3 with only about 400 KB of SRAM and 16 MB of flash. On a system that small, it may be unrealistic to expect i18n features on par with other contemporary devices, but it has proven itself great at what it does: with the addition of a community-developed firmware1, it supports EPUB files in most languages written in the Latin and Cyrillic scripts.

So adding support for Tamil should be as easy as sideloading a Tamil font, right?2 Except it’s not that simple.

The Tamil writing system is what’s called an ‘abugida’3: each unit is a consonant-vowel sequence. So although there are 247 characters in the language, they can all be represented with the 12 vowels and 18 consonants (plus the āytam, ஃ), or combinations of them.

Unicode takes the same approach. It doesn’t encode every possible Tamil character; instead it uses a much smaller set of letters and signs, falling within the range U+0B82 to U+0BCD (a span of 76 code points, not all of them assigned), to represent every character in modern use.
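To make that arithmetic concrete, here is a small Python sketch (the names are my own) that builds the traditional 247-letter set out of Unicode code points: the 12 vowels, the 18 consonants written with the pulli (virama) to mark a bare consonant, all 18 × 12 consonant-vowel combinations, and the āytam. Escapes are used instead of literal Tamil so that no editor can silently normalize the strings.

```python
# Independent vowels அ ஆ இ ஈ உ ஊ எ ஏ ஐ ஒ ஓ ஔ
VOWELS = "\u0B85\u0B86\u0B87\u0B88\u0B89\u0B8A\u0B8E\u0B8F\u0B90\u0B92\u0B93\u0B94"
# The 18 consonants: க ங ச ஞ ட ண த ந ப ம ய ர ல வ ழ ள ற ன
CONSONANTS = ("\u0B95\u0B99\u0B9A\u0B9E\u0B9F\u0BA3\u0BA4\u0BA8\u0BAA"
              "\u0BAE\u0BAF\u0BB0\u0BB2\u0BB5\u0BB4\u0BB3\u0BB1\u0BA9")
# Dependent vowel signs in the same order as VOWELS; அ (a) is inherent, so "".
VOWEL_SIGNS = ["", "\u0BBE", "\u0BBF", "\u0BC0", "\u0BC1", "\u0BC2",
               "\u0BC6", "\u0BC7", "\u0BC8", "\u0BCA", "\u0BCB", "\u0BCC"]
PULLI = "\u0BCD"   # virama: strips the inherent vowel
AYTHAM = "\u0B83"  # ஃ


def tamil_letters():
    letters = list(VOWELS)                                       # 12 vowels
    letters += [c + PULLI for c in CONSONANTS]                   # 18 pure consonants
    letters += [c + s for c in CONSONANTS for s in VOWEL_SIGNS]  # 216 combinations
    letters.append(AYTHAM)                                       # 1 aytham
    return letters


letters = tamil_letters()
code_points = {ch for letter in letters for ch in letter}
print(len(letters), len(code_points))  # 247 43
```

Only 43 distinct code points appear across all 247 letters, which is why a font plus a set of composition rules can stand in for a full character table.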

For example, the character for tea (டீ) is actually composed of two Unicode code points: ட (U+0B9F) and ◌ீ (U+0BC0). Nor is the order always left-to-right: the character ‘pay’ (பே) also consists of two code points, ப (U+0BAA) followed by ◌ே (U+0BC7), but the vowel sign ே is drawn before the consonant ப in the rendered character.

Keeping these things in mind, I designed a library to render Tamil characters in the CrossPoint eReader. The rendering happens at the system level, so Tamil text can be displayed in the UI itself as well as within an opened EPUB. The core of the program can be summarised as:

```cpp
// 3. Above vowel sign (ி ீ)
if (aboveVowel != 0) {
    PositionedGlyph g;
    g.codepoint = aboveVowel;
    g.xOffset = 0;
    g.yOffset = TamilOffset::ABOVE_VOWEL;
    g.zeroAdvance = false;
    cluster.glyphs.push_back(g);
}

// 4. Below vowel sign (ு ூ)
if (belowVowel != 0) {
    PositionedGlyph g;
    g.codepoint = belowVowel;
    g.xOffset = 0;
    g.yOffset = TamilOffset::BELOW_VOWEL;
    g.zeroAdvance = false;
    cluster.glyphs.push_back(g);
}

// 5. Right vowel sign (ா)
if (rightVowel != 0) {
    PositionedGlyph g;
    g.codepoint = rightVowel;
    g.xOffset = 0;
    g.yOffset = 0;
    g.zeroAdvance = false;
    cluster.glyphs.push_back(g);
}

// 6. Right-half of split vowel
if (rightSplit != 0) {
    PositionedGlyph g;
    g.codepoint = rightSplit;
    g.xOffset = 0;
    g.yOffset = 0;
    g.zeroAdvance = false;
    cluster.glyphs.push_back(g);
}

// 7. Virama / pulli (்): displayed above/after consonant, no advance
if (virama != 0) {
    PositionedGlyph g;
    g.codepoint = virama;
    g.xOffset = -1;
    g.yOffset = TamilOffset::VIRAMA;
    g.zeroAdvance = true;
    cluster.glyphs.push_back(g);
}

// 8. Anusvara (ஂ): above the consonant
if (anusvara != 0) {
    PositionedGlyph g;
    g.codepoint = anusvara;
    g.xOffset = 0;
    g.yOffset = TamilOffset::ANUSVARA;
    g.zeroAdvance = true;
    cluster.glyphs.push_back(g);
}
```

That’s right, simply adjusting the offsets based on the character input.

Is this the fix? Almost. It fails to account for the ‘u’ and ‘uu’ vowel signs (ு and ூ), which, unlike the other vowel signs, are not a simple matter of alignment: they change the shape of the whole character, which is how modern text rendering libraries display them.
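The reordering that பே needs, and the split vowels handled by the rightSplit slot in the code above, can be illustrated in a few lines. This is a minimal Python sketch, not the CrossPoint library’s actual code, and the function names are my own: given one consonant-plus-vowel-sign cluster in Unicode (logical) order, it returns the pieces in the left-to-right order they are drawn. The split signs ொ ோ ௌ are broken into halves following their Unicode canonical decompositions, and the left-side signs ெ ே ை are moved in front of the consonant. It deliberately does nothing about ு and ூ, which need real glyph substitution.

```python
import unicodedata

# Vowel signs stored after the consonant but drawn to its left: ெ ே ை
LEFT_SIGNS = {"\u0BC6", "\u0BC7", "\u0BC8"}

# Split vowel signs ொ ோ ௌ and their canonical decompositions (left, right)
SPLIT_SIGNS = {
    "\u0BCA": ("\u0BC6", "\u0BBE"),
    "\u0BCB": ("\u0BC7", "\u0BBE"),
    "\u0BCC": ("\u0BC6", "\u0BD7"),
}


def visual_order(cluster):
    """Return the code points of one Tamil cluster in left-to-right drawing order."""
    pieces = []
    for ch in cluster:
        pieces.extend(SPLIT_SIGNS.get(ch, (ch,)))  # split ொ ோ ௌ into two halves
    left = [ch for ch in pieces if ch in LEFT_SIGNS]
    rest = [ch for ch in pieces if ch not in LEFT_SIGNS]
    return left + rest


def names(chars):
    return [unicodedata.name(ch) for ch in chars]


print(names(visual_order("\u0BAA\u0BC7")))  # பே
# ['TAMIL VOWEL SIGN EE', 'TAMIL LETTER PA']
```

Because the split signs are broken up before reordering, கொ gives the same drawing order whether it arrives precomposed (க + ொ) or already decomposed (க + ெ + ா).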

-

You could technically do that, but the text becomes undecipherable out of the box.

-

Representing Money in Writing

This is an excerpt from a book I was reading:

[They] say that the financial value of the bauxite deposits of Orissa alone is 2.27 trillion dollars (twice India’s gross domestic product). That was at 2004 prices. At today’s prices it would be about four trillion dollars. A trillion has twelve zeroes.

This raises a lot of questions: How much is 2.27 trillion in today’s prices? How much is India’s GDP now? What year was the author referring to by “At today’s prices”?

This is a common pattern in most literature. It is annoying, but it is probably safer to assume that the reader has some sense of economics, because the target audience almost always has some grasp of the context.

Is there a better way to represent a quantitative measure of money in the past in terms of some present day reference?

The excerpt above is still better than most, because it provides a scale of reference (the value compared to the country’s GDP).
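The first question raised above, converting an old amount into present-day prices, comes down to a ratio of price levels. As a sketch, writing P for whichever price measure applies (a consumer price index for everyday sums, or the market price itself for a commodity such as bauxite), an amount quoted back then converts to present-day terms as:

\[ V_\text{now} = V_\text{then} \times \frac{P_\text{now}}{P_\text{then}} \]

Dividing the result by a reference quantity from the same year, such as that year’s GDP, then gives the kind of scale the excerpt provides.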

What could be used instead for smaller sums of money?1 Is there a better way to express them than through food? Even The Economist compares different currencies through the Big Mac2. Something like:

“I got a quarter, which was enough to buy a loaf of bread and a bit of meat.”

“Her weekly allowance was two shillings, just enough to cover her meals and a small candle.”

On the other hand, it would be annoying to be spoon-fed the details of every sum mentioned, which is probably why such explanations are usually avoided. Given that, a short section in the preface or the introduction would work better.

However, we live in the 21st century, and there are more modern ways to read text than on printed paper. We could make monetary values dynamic, with their content depending on where the reader is from. Example pseudocode:

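```javascript
(async function () {
  // Hypothetical country-to-currency table; extend as needed.
  const countryToCurrency = { US: "USD", GB: "GBP", DE: "EUR", FR: "EUR", JP: "JPY" };

  // `API` is a placeholder for whatever geolocation / exchange-rate service is used.
  const readerCountry = await API.getReaderCountry(); // e.g., "US"
  const localCurrency = countryToCurrency[readerCountry] || "USD";

  // Fetch the rate once rather than once per element.
  // Assumed to return how many INR one unit of localCurrency costs.
  const exchangeRate = await API.getExchangeRate("INR", localCurrency);

  document.querySelectorAll(".inr").forEach(el => {
    const inrAmount = parseFloat(el.textContent.replace(/[^\d.]/g, ""));
    if (Number.isNaN(inrAmount)) return; // no number found in this element

    el.title = `≈ ${(inrAmount / exchangeRate).toFixed(2)} ${localCurrency}`;
    el.style.borderBottom = "1px dotted";
    el.style.cursor = "help";
  });
})();
```

So a reader from Europe does not have to wonder how much a thousand rupees is, since their reading app shows them how much that is in their local currency. Try hovering over the money mentioned: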

His monthly salary was about 10,000 INR

And the currency that the original amount is converted into changes depending on the reader’s location.

-

Will there be another Emacs?

If you have ever spoken with me, chances are you’ve heard of ‘Emacs’.1

On the surface it looks like a text editor, but beneath it is a full Lisp interpreter. It may not seem impressive until you realise what that actually means:

You are given the freedom to modify the very environment you are using. This means you can inspect and override every single function and modify core behaviour at runtime, without having to restart or recompile anything.

And Lisp languages (not to be confused with LISP) are so intuitive that anyone can start hacking on them. That was the case for me too, when I was 14. It was the best way for me to get into programming, and the enthusiasm I had back then still lives on.

My .emacs.d directory has now grown past 10k SLOC:

```text
~ ❯ sloc --format=summary ~/.emacs.d/*.el ~/.emacs.d/lisp/*.el ~/.emacs.d/site-lisp/chizu/*.el
│ Language    │ Files │   %   │ Code  │  %   │ Comment │  %   │
├─────────────┼───────┼───────┼───────┼──────┼─────────┼──────┤
│ EmacsLisp   │    46 │  98.2 │ 10122 │ 60.6 │    2051 │ 12.3 │
│ Markdown    │     1 │   1.8 │     0 │  0.0 │     128 │ 35.8 │
├─────────────┼───────┼───────┼───────┼──────┼─────────┼──────┤
│ Sum         │    47 │ 100.0 │ 10122 │ 59.3 │    2179 │ 12.8 │
└─────────────┴───────┴───────┴───────┴──────┴─────────┴──────┘
```

This excludes the hundreds of community packages I’ve installed, almost all of which come from other users like me. There are so many useful packages you couldn’t have imagined yourself: magit, yasnippet, and many more.

It may not be impressive in the era of fancy IDEs and LLMs that do the job for you, but that totally misses the point: it is less about what it does and more about how it handles things that would otherwise be tedious and frustrating.

This blog used to run on a handwritten static-site generator before I later switched to Jekyll. I was amazed that the program worked at all, given how inexperienced I was at programming back then. That didn’t matter, however, as long as it worked:

```elisp
(defun add-entries-to-archive ()
  (with-current-buffer
      (find-file-noselect "/home/vetrivln/Downloads/public_html/archives.html")
    (goto-char (point-min))
    (search-forward-regexp "<ul>")
    ;; Delete the old entries between <ul> and </ul>, then move point back inside the list.
    (delete-region (point) (- (search-forward-regexp "</ul>") 6))
    (goto-char (- (point) 6))
    (dolist (file (reverse (directory-files "~/Downloads/public_html/articles/"
                                            t "^[^_].*.org$" nil)))
      ;; The hardcoded offsets 42 and 52 depend on the length of the
      ;; articles directory's absolute path.
      (let ((date (substring file 42 52))
            (file-name (substring file 42 -4))
            (title (progn
                     (with-current-buffer (find-file-noselect file)
                       (goto-char (point-min))
                       (buffer-substring-no-properties 10 (line-end-position))))))
        (insert (format "\n<li><div class=\"date\">%s  </div><a href=\"articles/%s.html\"><div class=\"title\">%s</div></a></li>\n"
                        date file-name title))
        (search-forward-regexp "</ul>")
        (goto-char (- (point) 6))
        (save-buffer)))))
```

In hindsight this was not the best way to do it, but that does not matter.

Here’s another function I wrote for an EPUB reading mode: it lets me pick one of the many words on screen, queries Merriam-Webster for its meaning, and shows only the definition from the retrieved page:

```elisp
(require 'url)
(require 'dom)
(require 'cl-lib)
(require 'subr-x)

(defun find-dict-meaning (word)
  "Query Merriam Webster for the definition of WORD."
  (interactive
   (list (cond ((use-region-p)
                (buffer-substring-no-properties (region-beginning) (region-end)))
               (current-prefix-arg
                (or (thing-at-point 'word t)
                    ;; (read-string "word: " (thing-at-point 'word t))
                    (error "No word to query")))
               ;; Otherwise jump to a word on screen with avy and use it.
               ((save-window-excursion
                  (call-interactively #'avy-goto-word-1)
                  (thing-at-point 'symbol t))))))
  (url-retrieve
   (concat "https://www.merriam-webster.com/dictionary/" (url-hexify-string word))
   (lambda (status)
     ;; Kill the response buffer even if parsing fails.
     (unwind-protect
         (progn
           (when-let* ((err (plist-get status :error)))
             (user-error "Failed to query Merriam Webster: %S %s" err word))
           (goto-char (point-min))
           (forward-paragraph)            ; skip the HTTP header
           (let* ((dom (libxml-parse-html-region (point) (point-max)))
                  (text (dom-strings (dom-by-class dom "dtText"))))
             ;; Remove repeated strings.  Appending ": " ensures the surviving
             ;; copy of that separator is the last element, which `nbutlast' drops.
             (setq text (mapconcat (lambda (s) (propertize s 'face 'italic))
                                   (nbutlast (cl-remove-duplicates
                                              (append text '(": "))
                                              :test #'string=))
                                   " or "))
             (message "%s: %s" word (string-clean-whitespace text))))
       (kill-buffer)))))
```

My .emacs.d directory is a graveyard of hundreds of other procedures that once served a purpose. They strangely remind me of a time when I did not have the many tools now at my disposal, but had a curiosity and enthusiasm of a much stronger magnitude than what I currently possess.

It feels wrong to be nostalgic about something as alive as Emacs: surely there is still as active a community now as there was back then. There’s no way for me to know, especially since I haven’t logged in to IRC in years.

-

When do you actually 'know' a language?

Or how I am no different from a monoglot despite speaking many languages.

It is actually very easy to learn languages at the surface level. Being able to order food, ask for directions, and greet the people of the country you just arrived in takes a few days at most.

Before the era of multiculturalism, a second language was learnt only by diplomats, literati, traders and people of mixed origin. Since the advent of globalisation, people are expected to learn at least two languages, often more: the lingua franca, their mother tongue and the language of the land they reside in.

Standard language proficiency scores are only useful for the first case: being able to get your work done, or to understand what was written in a different language. What, then, is the metric for practical purposes?

You hear from a lot of people that travelling makes you realise you’ve been living in a bubble and that there are a lot of things you have yet to learn. What you realise, and how intensely, is very subjective and depends on your worldview and how you grew up.

For me, one lesson was a reality check about my verbal communication, especially in English. On the one hand, I found that I could understand what the other person said to me regardless of how unusual or thick their accent was.

This might seem trivial, but I’ve come to notice that a lot of non-native speakers have difficulty understanding accents from regions other than their own: the average Chinese or Vietnamese speaker, for instance, has trouble understanding English spoken by someone who isn’t Chinese or Vietnamese.

There is, however, a level of language proficiency beyond understanding or speaking in various accents: being able to express your thoughts as freely as you would in your native language. And I’ve come to realise that this seems impossible for me, or at least very hard to attain.

Who do you usually have deep or long conversations with? Family? Friends? In my case, both share my native language. Why is it that my close friends were all people who primarily spoke Tamil? Is it simply chance, or was it because I was unable to express myself to those who didn’t speak Tamil?

Even when I hang out with two strangers (of different ethnicities) who don’t know each other, they seem to enjoy each other’s company more when they share the same native language.

Is language a more important factor than culture and ethnicity in truly knowing someone? How do I bridge the gap between my understanding of a language and that of a native speaker? Will I ever be able to express myself in a language that isn’t mine?